使用 Flux 窗口化和聚合数据

此页面记录了早期版本的 InfluxDB OSS。 InfluxDB OSS v2 是最新的稳定版本。 请参阅等效的 InfluxDB v2 文档: 使用 Flux 窗口化和聚合数据。

使用时间序列数据执行的常见操作是将数据分组到时间窗口中,或“窗口化”数据,然后将窗口化值聚合为新值。本指南将介绍如何使用 Flux 窗口化和聚合数据,并演示在此过程中数据的形状是如何变化的。

如果您刚开始使用 Flux 查询,请查看以下内容

以下示例深入介绍了窗口化和聚合数据所需的步骤。aggregateWindow() 函数为您执行这些操作,但了解在此过程中数据的形状如何变化有助于成功创建您期望的输出。

数据集

为了本指南的目的,定义一个变量来表示您的基本数据集。以下示例查询主机内存使用率。

dataSet = from(bucket: "db/rp")

|> range(start: -5m)

|> filter(fn: (r) =>

r._measurement == "mem" and

r._field == "used_percent"

)

|> drop(columns: ["host"])

此示例从返回的数据中删除 host 列,因为内存数据仅针对单个主机进行跟踪,并且它简化了输出表。如果监控多个主机上的内存,则不建议删除 host 列,但这并非强制要求。

dataSet 现在可以用来表示您的基本数据,它看起来类似于以下内容

Table: keys: [_start, _stop, _field, _measurement]

_start:time _stop:time _field:string _measurement:string _time:time _value:float

------------------------------ ------------------------------ ---------------------- ---------------------- ------------------------------ ----------------------------

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:50:00.000000000Z 71.11611366271973

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:50:10.000000000Z 67.39630699157715

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:50:20.000000000Z 64.16666507720947

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:50:30.000000000Z 64.19951915740967

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:50:40.000000000Z 64.2122745513916

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:50:50.000000000Z 64.22209739685059

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:51:00.000000000Z 64.6336555480957

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:51:10.000000000Z 64.16516304016113

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:51:20.000000000Z 64.18349742889404

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:51:30.000000000Z 64.20474052429199

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:51:40.000000000Z 68.65062713623047

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:51:50.000000000Z 67.20139980316162

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:52:00.000000000Z 70.9143877029419

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:52:10.000000000Z 64.14549350738525

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:52:20.000000000Z 64.15379047393799

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:52:30.000000000Z 64.1592264175415

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:52:40.000000000Z 64.18190002441406

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:52:50.000000000Z 64.28837776184082

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:53:00.000000000Z 64.29731845855713

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:53:10.000000000Z 64.36963081359863

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:53:20.000000000Z 64.37397003173828

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:53:30.000000000Z 64.44413661956787

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:53:40.000000000Z 64.42906856536865

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:53:50.000000000Z 64.44573402404785

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:54:00.000000000Z 64.48912620544434

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:54:10.000000000Z 64.49522972106934

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:54:20.000000000Z 64.48652744293213

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:54:30.000000000Z 64.49949741363525

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:54:40.000000000Z 64.4949197769165

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:54:50.000000000Z 64.49787616729736

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:55:00.000000000Z 64.49816226959229

窗口化数据

使用 window() 函数 根据时间范围对数据进行分组。与 window() 一起传递的最常见参数是 every,它定义了窗口之间的时间长度。还有其他参数可用,但在本示例中,将基本数据集窗口化为一分钟的窗口。

dataSet

|> window(every: 1m)

every 参数支持所有 有效的持续时间单位,包括日历月 (1mo) 和年 (1y)。

每个时间窗口都将在其自己的表中输出,其中包含窗口内的所有记录。

window() 输出表

Table: keys: [_start, _stop, _field, _measurement]

_start:time _stop:time _field:string _measurement:string _time:time _value:float

------------------------------ ------------------------------ ---------------------- ---------------------- ------------------------------ ----------------------------

2018-11-03T17:50:00.000000000Z 2018-11-03T17:51:00.000000000Z used_percent mem 2018-11-03T17:50:00.000000000Z 71.11611366271973

2018-11-03T17:50:00.000000000Z 2018-11-03T17:51:00.000000000Z used_percent mem 2018-11-03T17:50:10.000000000Z 67.39630699157715

2018-11-03T17:50:00.000000000Z 2018-11-03T17:51:00.000000000Z used_percent mem 2018-11-03T17:50:20.000000000Z 64.16666507720947

2018-11-03T17:50:00.000000000Z 2018-11-03T17:51:00.000000000Z used_percent mem 2018-11-03T17:50:30.000000000Z 64.19951915740967

2018-11-03T17:50:00.000000000Z 2018-11-03T17:51:00.000000000Z used_percent mem 2018-11-03T17:50:40.000000000Z 64.2122745513916

2018-11-03T17:50:00.000000000Z 2018-11-03T17:51:00.000000000Z used_percent mem 2018-11-03T17:50:50.000000000Z 64.22209739685059

Table: keys: [_start, _stop, _field, _measurement]

_start:time _stop:time _field:string _measurement:string _time:time _value:float

------------------------------ ------------------------------ ---------------------- ---------------------- ------------------------------ ----------------------------

2018-11-03T17:51:00.000000000Z 2018-11-03T17:52:00.000000000Z used_percent mem 2018-11-03T17:51:00.000000000Z 64.6336555480957

2018-11-03T17:51:00.000000000Z 2018-11-03T17:52:00.000000000Z used_percent mem 2018-11-03T17:51:10.000000000Z 64.16516304016113

2018-11-03T17:51:00.000000000Z 2018-11-03T17:52:00.000000000Z used_percent mem 2018-11-03T17:51:20.000000000Z 64.18349742889404

2018-11-03T17:51:00.000000000Z 2018-11-03T17:52:00.000000000Z used_percent mem 2018-11-03T17:51:30.000000000Z 64.20474052429199

2018-11-03T17:51:00.000000000Z 2018-11-03T17:52:00.000000000Z used_percent mem 2018-11-03T17:51:40.000000000Z 68.65062713623047

2018-11-03T17:51:00.000000000Z 2018-11-03T17:52:00.000000000Z used_percent mem 2018-11-03T17:51:50.000000000Z 67.20139980316162

Table: keys: [_start, _stop, _field, _measurement]

_start:time _stop:time _field:string _measurement:string _time:time _value:float

------------------------------ ------------------------------ ---------------------- ---------------------- ------------------------------ ----------------------------

2018-11-03T17:52:00.000000000Z 2018-11-03T17:53:00.000000000Z used_percent mem 2018-11-03T17:52:00.000000000Z 70.9143877029419

2018-11-03T17:52:00.000000000Z 2018-11-03T17:53:00.000000000Z used_percent mem 2018-11-03T17:52:10.000000000Z 64.14549350738525

2018-11-03T17:52:00.000000000Z 2018-11-03T17:53:00.000000000Z used_percent mem 2018-11-03T17:52:20.000000000Z 64.15379047393799

2018-11-03T17:52:00.000000000Z 2018-11-03T17:53:00.000000000Z used_percent mem 2018-11-03T17:52:30.000000000Z 64.1592264175415

2018-11-03T17:52:00.000000000Z 2018-11-03T17:53:00.000000000Z used_percent mem 2018-11-03T17:52:40.000000000Z 64.18190002441406

2018-11-03T17:52:00.000000000Z 2018-11-03T17:53:00.000000000Z used_percent mem 2018-11-03T17:52:50.000000000Z 64.28837776184082

Table: keys: [_start, _stop, _field, _measurement]

_start:time _stop:time _field:string _measurement:string _time:time _value:float

------------------------------ ------------------------------ ---------------------- ---------------------- ------------------------------ ----------------------------

2018-11-03T17:53:00.000000000Z 2018-11-03T17:54:00.000000000Z used_percent mem 2018-11-03T17:53:00.000000000Z 64.29731845855713

2018-11-03T17:53:00.000000000Z 2018-11-03T17:54:00.000000000Z used_percent mem 2018-11-03T17:53:10.000000000Z 64.36963081359863

2018-11-03T17:53:00.000000000Z 2018-11-03T17:54:00.000000000Z used_percent mem 2018-11-03T17:53:20.000000000Z 64.37397003173828

2018-11-03T17:53:00.000000000Z 2018-11-03T17:54:00.000000000Z used_percent mem 2018-11-03T17:53:30.000000000Z 64.44413661956787

2018-11-03T17:53:00.000000000Z 2018-11-03T17:54:00.000000000Z used_percent mem 2018-11-03T17:53:40.000000000Z 64.42906856536865

2018-11-03T17:53:00.000000000Z 2018-11-03T17:54:00.000000000Z used_percent mem 2018-11-03T17:53:50.000000000Z 64.44573402404785

Table: keys: [_start, _stop, _field, _measurement]

_start:time _stop:time _field:string _measurement:string _time:time _value:float

------------------------------ ------------------------------ ---------------------- ---------------------- ------------------------------ ----------------------------

2018-11-03T17:54:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:54:00.000000000Z 64.48912620544434

2018-11-03T17:54:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:54:10.000000000Z 64.49522972106934

2018-11-03T17:54:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:54:20.000000000Z 64.48652744293213

2018-11-03T17:54:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:54:30.000000000Z 64.49949741363525

2018-11-03T17:54:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:54:40.000000000Z 64.4949197769165

2018-11-03T17:54:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:54:50.000000000Z 64.49787616729736

Table: keys: [_start, _stop, _field, _measurement]

_start:time _stop:time _field:string _measurement:string _time:time _value:float

------------------------------ ------------------------------ ---------------------- ---------------------- ------------------------------ ----------------------------

2018-11-03T17:55:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:55:00.000000000Z 64.49816226959229

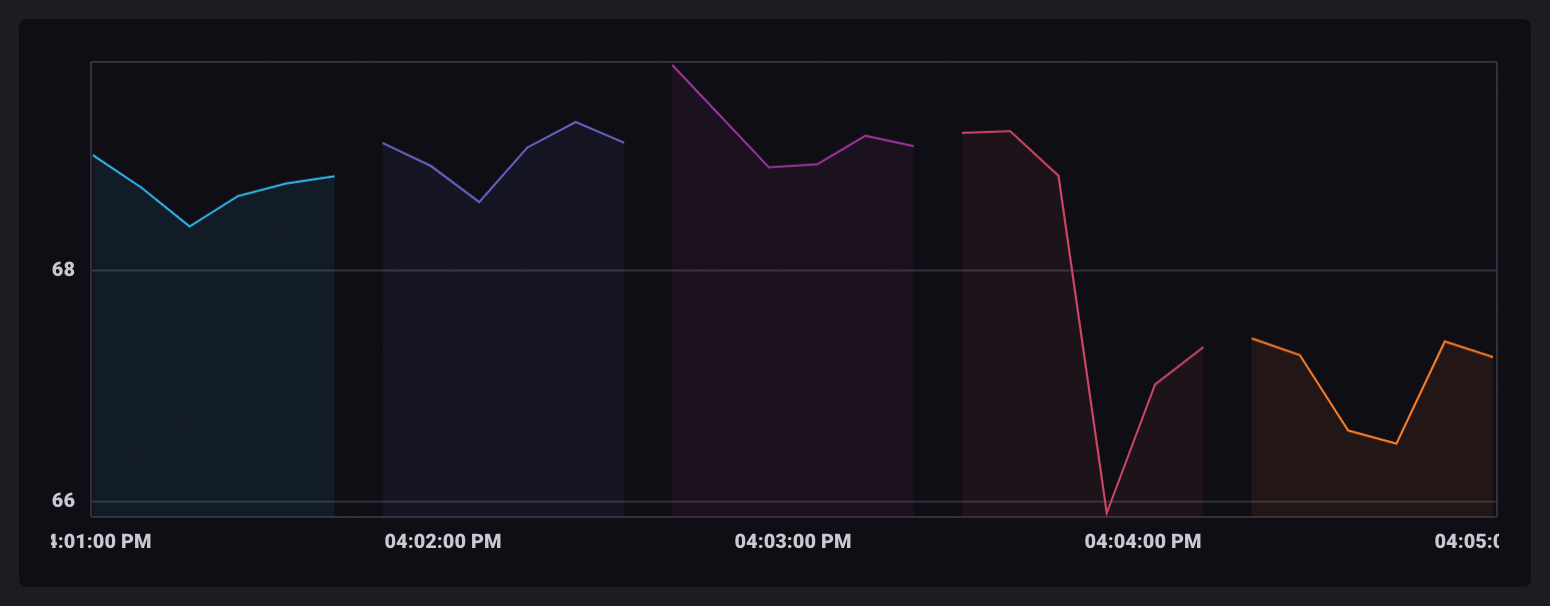

在 InfluxDB UI 中可视化时,每个窗口表都以不同的颜色显示。

聚合数据

聚合函数 获取表中所有行的值,并使用它们执行聚合操作。结果将作为单行表中的新值输出。

由于窗口化数据被拆分为单独的表,因此聚合操作会针对每个表单独运行,并输出仅包含聚合值的新表。

对于本示例,使用 mean() 函数 输出每个窗口的平均值

dataSet

|> window(every: 1m)

|> mean()

mean() 输出表

Table: keys: [_start, _stop, _field, _measurement]

_start:time _stop:time _field:string _measurement:string _value:float

------------------------------ ------------------------------ ---------------------- ---------------------- ----------------------------

2018-11-03T17:50:00.000000000Z 2018-11-03T17:51:00.000000000Z used_percent mem 65.88549613952637

Table: keys: [_start, _stop, _field, _measurement]

_start:time _stop:time _field:string _measurement:string _value:float

------------------------------ ------------------------------ ---------------------- ---------------------- ----------------------------

2018-11-03T17:51:00.000000000Z 2018-11-03T17:52:00.000000000Z used_percent mem 65.50651391347249

Table: keys: [_start, _stop, _field, _measurement]

_start:time _stop:time _field:string _measurement:string _value:float

------------------------------ ------------------------------ ---------------------- ---------------------- ----------------------------

2018-11-03T17:52:00.000000000Z 2018-11-03T17:53:00.000000000Z used_percent mem 65.30719598134358

Table: keys: [_start, _stop, _field, _measurement]

_start:time _stop:time _field:string _measurement:string _value:float

------------------------------ ------------------------------ ---------------------- ---------------------- ----------------------------

2018-11-03T17:53:00.000000000Z 2018-11-03T17:54:00.000000000Z used_percent mem 64.39330975214641

Table: keys: [_start, _stop, _field, _measurement]

_start:time _stop:time _field:string _measurement:string _value:float

------------------------------ ------------------------------ ---------------------- ---------------------- ----------------------------

2018-11-03T17:54:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 64.49386278788249

Table: keys: [_start, _stop, _field, _measurement]

_start:time _stop:time _field:string _measurement:string _value:float

------------------------------ ------------------------------ ---------------------- ---------------------- ----------------------------

2018-11-03T17:55:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 64.49816226959229

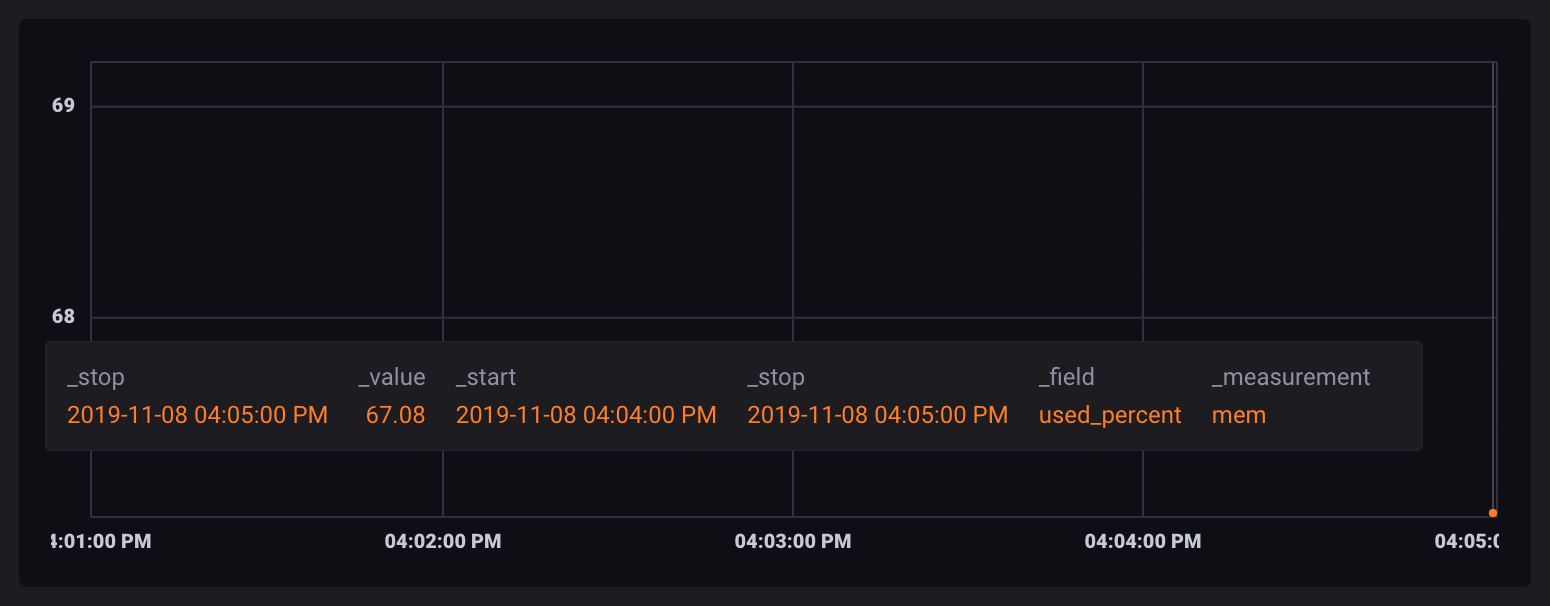

由于每个数据点都包含在其自己的表中,因此在可视化时,它们显示为单个、不连接的点。

重新创建时间列

请注意 聚合输出表 中没有 _time 列。 因为每个表中的记录被聚合在一起,所以它们的时间戳不再适用,并且该列从组键和表中删除。

另请注意,_start 和 _stop 列仍然存在。这些列表示时间窗口的下限和上限。

许多 Flux 函数依赖于 _time 列。要在聚合函数之后进一步处理您的数据,您需要重新添加 _time。使用 duplicate() 函数 将 _start 或 _stop 列复制为新的 _time 列。

dataSet

|> window(every: 1m)

|> mean()

|> duplicate(column: "_stop", as: "_time")

duplicate() 输出表

Table: keys: [_start, _stop, _field, _measurement]

_start:time _stop:time _field:string _measurement:string _time:time _value:float

------------------------------ ------------------------------ ---------------------- ---------------------- ------------------------------ ----------------------------

2018-11-03T17:50:00.000000000Z 2018-11-03T17:51:00.000000000Z used_percent mem 2018-11-03T17:51:00.000000000Z 65.88549613952637

Table: keys: [_start, _stop, _field, _measurement]

_start:time _stop:time _field:string _measurement:string _time:time _value:float

------------------------------ ------------------------------ ---------------------- ---------------------- ------------------------------ ----------------------------

2018-11-03T17:51:00.000000000Z 2018-11-03T17:52:00.000000000Z used_percent mem 2018-11-03T17:52:00.000000000Z 65.50651391347249

Table: keys: [_start, _stop, _field, _measurement]

_start:time _stop:time _field:string _measurement:string _time:time _value:float

------------------------------ ------------------------------ ---------------------- ---------------------- ------------------------------ ----------------------------

2018-11-03T17:52:00.000000000Z 2018-11-03T17:53:00.000000000Z used_percent mem 2018-11-03T17:53:00.000000000Z 65.30719598134358

Table: keys: [_start, _stop, _field, _measurement]

_start:time _stop:time _field:string _measurement:string _time:time _value:float

------------------------------ ------------------------------ ---------------------- ---------------------- ------------------------------ ----------------------------

2018-11-03T17:53:00.000000000Z 2018-11-03T17:54:00.000000000Z used_percent mem 2018-11-03T17:54:00.000000000Z 64.39330975214641

Table: keys: [_start, _stop, _field, _measurement]

_start:time _stop:time _field:string _measurement:string _time:time _value:float

------------------------------ ------------------------------ ---------------------- ---------------------- ------------------------------ ----------------------------

2018-11-03T17:54:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:55:00.000000000Z 64.49386278788249

Table: keys: [_start, _stop, _field, _measurement]

_start:time _stop:time _field:string _measurement:string _time:time _value:float

------------------------------ ------------------------------ ---------------------- ---------------------- ------------------------------ ----------------------------

2018-11-03T17:55:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:55:00.000000000Z 64.49816226959229

“取消窗口化”聚合表

通常,将聚合值保存在单独的表中不是您期望的数据格式。使用 window() 函数将您的数据“取消窗口化”为单个无限 (inf) 窗口。

dataSet

|> window(every: 1m)

|> mean()

|> duplicate(column: "_stop", as: "_time")

|> window(every: inf)

窗口化需要 _time 列,这就是为什么在聚合后需要重新创建 _time 列。

取消窗口化输出表

Table: keys: [_start, _stop, _field, _measurement]

_start:time _stop:time _field:string _measurement:string _time:time _value:float

------------------------------ ------------------------------ ---------------------- ---------------------- ------------------------------ ----------------------------

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:51:00.000000000Z 65.88549613952637

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:52:00.000000000Z 65.50651391347249

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:53:00.000000000Z 65.30719598134358

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:54:00.000000000Z 64.39330975214641

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:55:00.000000000Z 64.49386278788249

2018-11-03T17:50:00.000000000Z 2018-11-03T17:55:00.000000000Z used_percent mem 2018-11-03T17:55:00.000000000Z 64.49816226959229

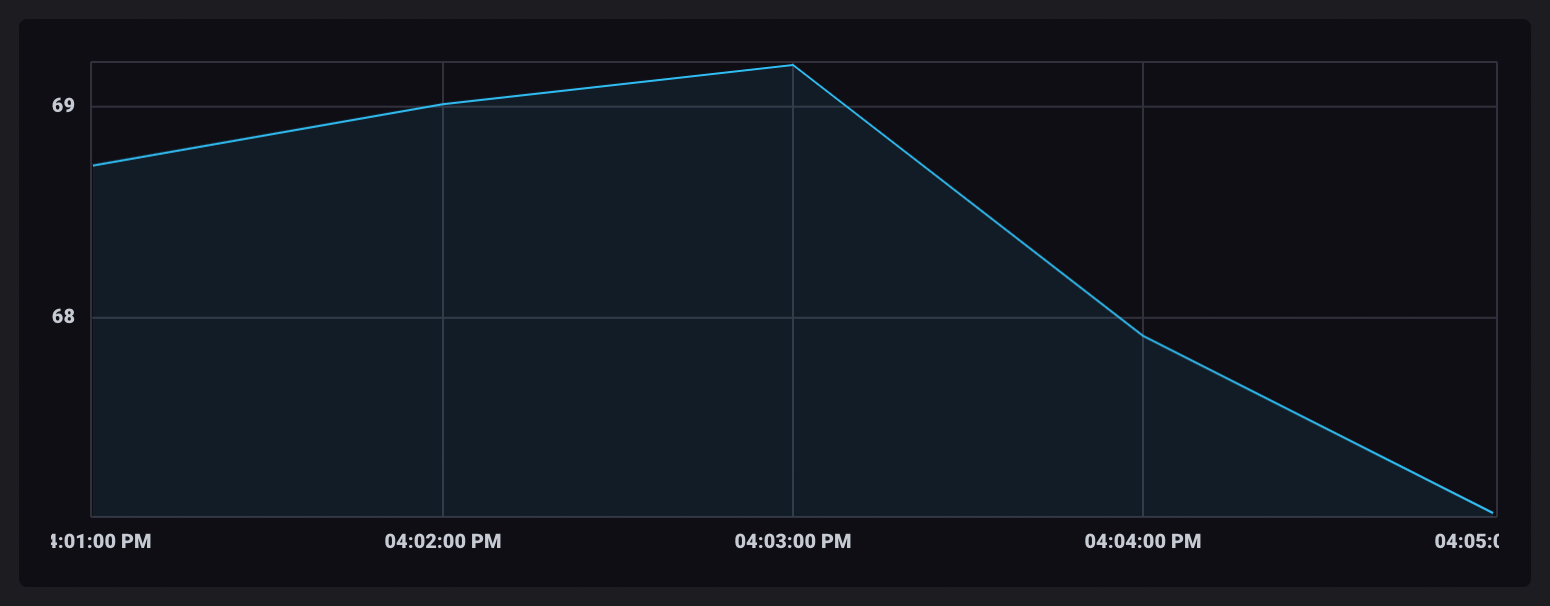

当聚合值在单个表中时,可视化中的数据点将连接起来。

总结

您现在已经创建了一个窗口化和聚合数据的 Flux 查询。本指南中概述的数据转换过程应适用于所有聚合操作。

Flux 还提供了 aggregateWindow() 函数,该函数为您执行所有这些单独的函数。

以下 Flux 查询将返回相同的结果

aggregateWindow 函数

dataSet

|> aggregateWindow(every: 1m, fn: mean)

此页是否对您有帮助?

感谢您的反馈!